Introduction to Subject – What is it about?

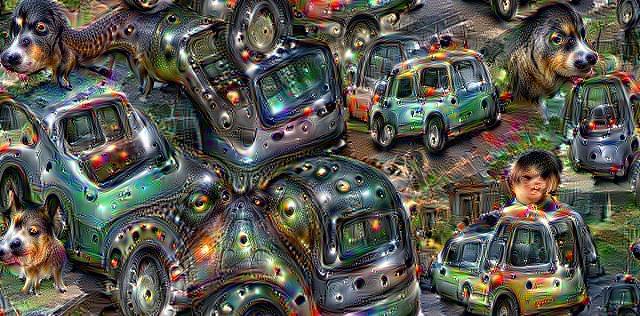

My subject for this assignment is the Earth, and the series is about the impact of global technology, particularly the increasing dependency on computerisation in assisted learning and decision making. In making my series of ten images, I first created five ‘seed’ photographs. Each one is an aerial view of a location where I took a picture as part of the EYV module, one from each assignment. The images were captured using the Google Earth platform (and will be attributed on my blog under ‘non-commercial fair-use’ guidelines). Each photograph is a unique view of the Earth’s surface and, while they appear homogeneous, each image contains new information by showing differences in landscape features. Using images captured from Google Earth was a deliberate decision to devolve part of the responsibility for image creation within the creative process. I interpreted the assignment brief as requiring different interpretations of the same subject, so as a continuation of the theme I decided to devolve this process to a computer algorithm. For the second series of five photographs, I used a computer program called DeepDream to visually reinterpret the five ‘seed’ images. I wanted the results of this process to appeal and repel in equal measure. The appeal of the resultant images is representative of the appeal of technology in general; the repulsion of the photographs (are they still photographs?) reflects concerns over the role that technology plays in society.

Influence & Research

Part of the influence for this assignment was the research into Thomas Ruff in part one of the course. I enjoyed the study, but I felt slightly wary of the fact that he didn’t take the images himself. I wanted to do something deliberately challenging in this assignment, which to me meant not using a camera, not having my usual control of the ‘creative process’, and producing images which challenge my view of ‘photography’. A “simple” interpretation of the brief would have been to take still life images of a single subject, changing the viewing angle and distance. I found that simple approach difficult, as I couldn’t think of a way to make it creatively appealing. This led me to a wider interpretation of the brief: something ‘difficult’ and challenging with, hopefully, a more creative outcome.

Part of the appeal of this project came from my background in data science and my understanding of machine learning; the process in this assignment provided an opportunity to blend two personal interests.

My research also led me to view the work of Benjamin Grant and his Overview project, in which he produces high-definition satellite images of different geometric landscapes.

Process

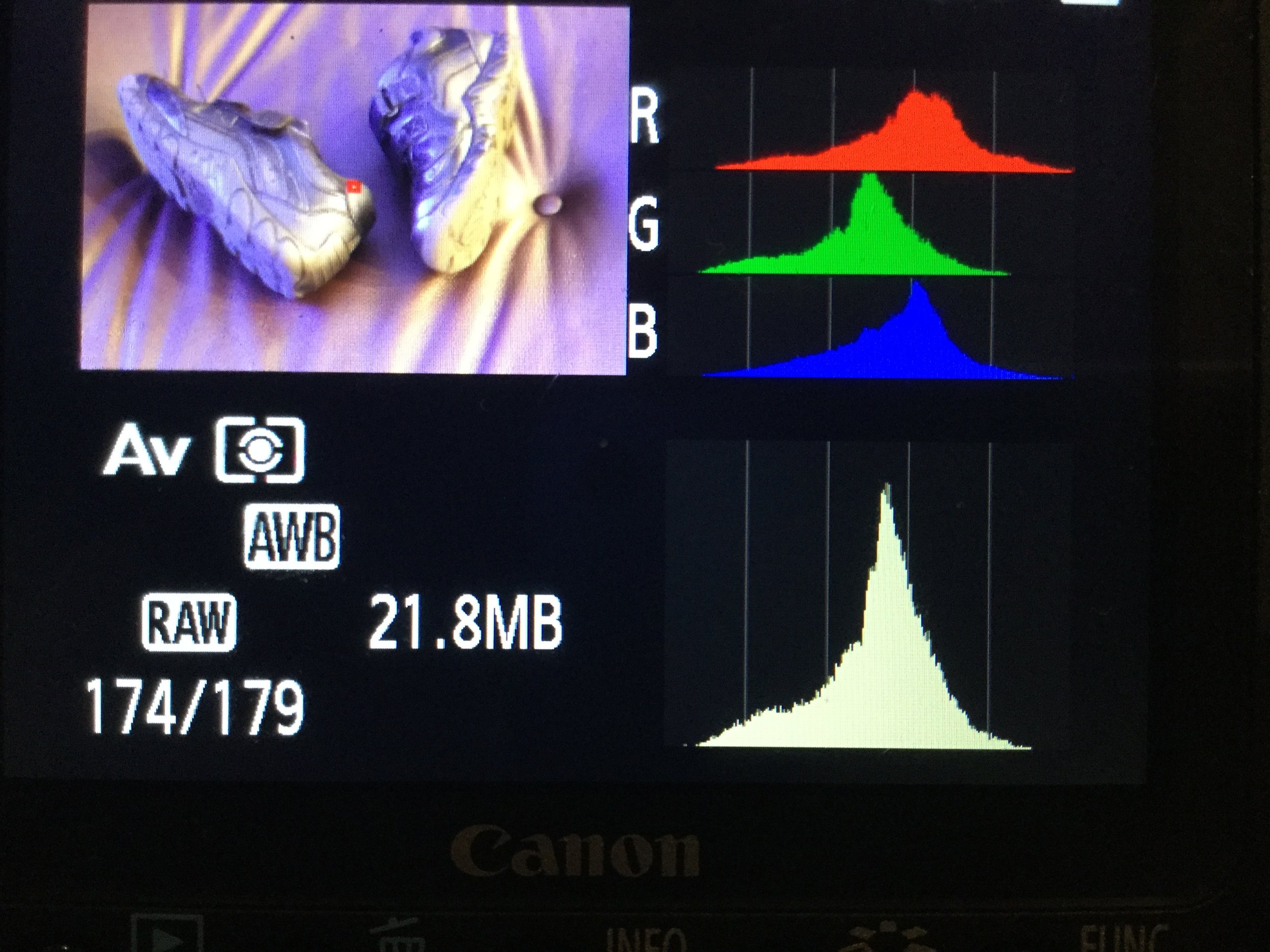

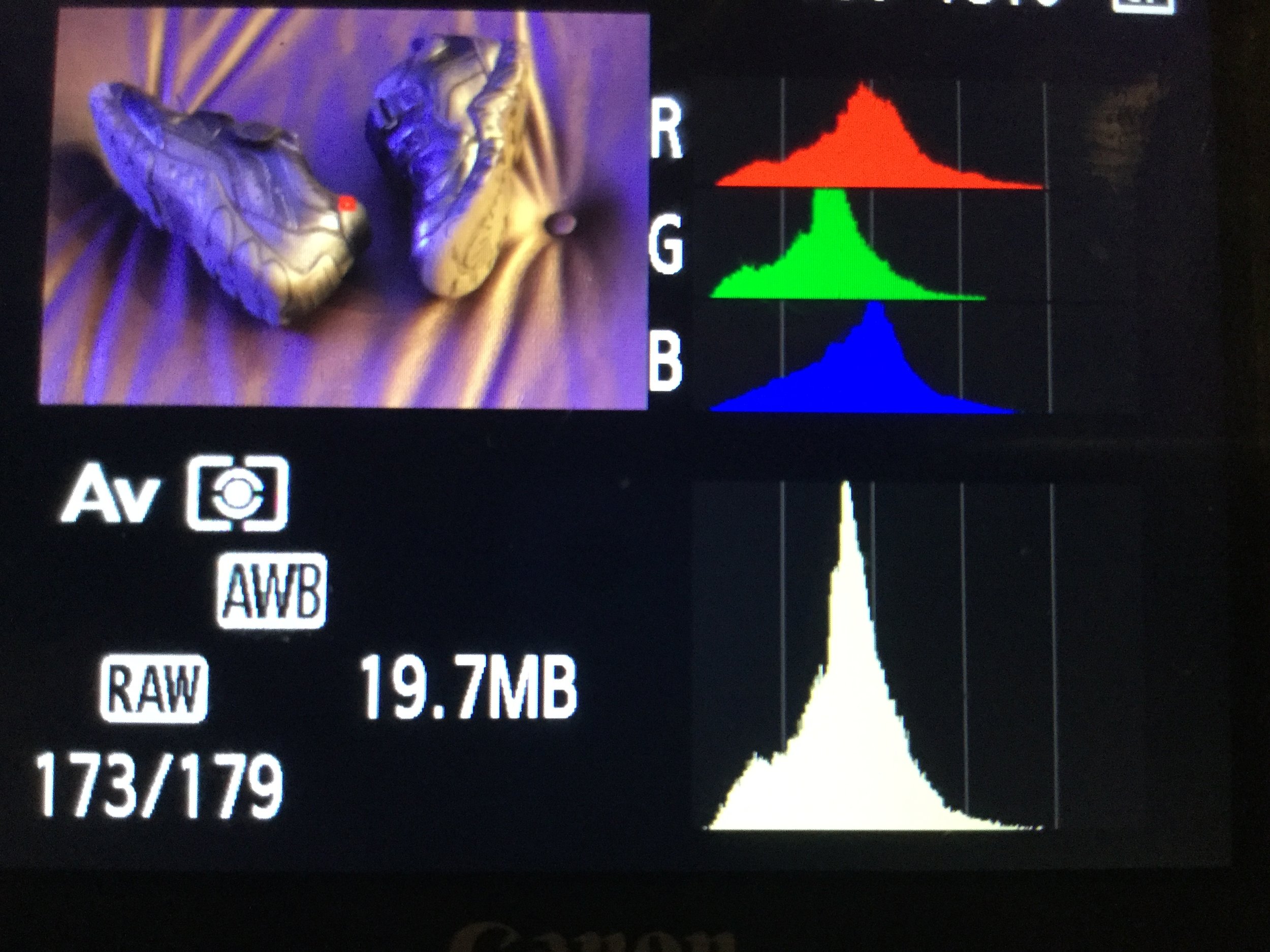

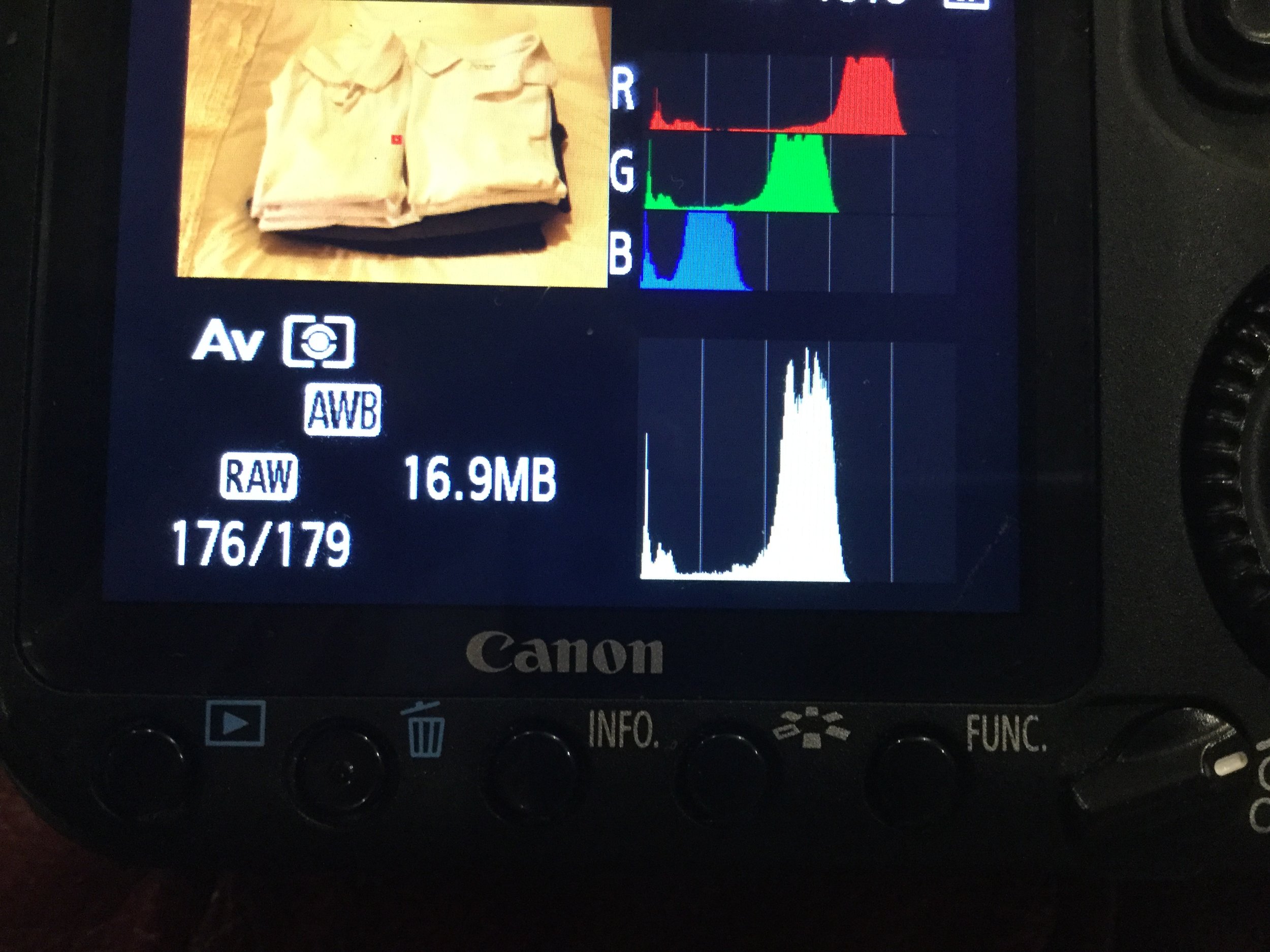

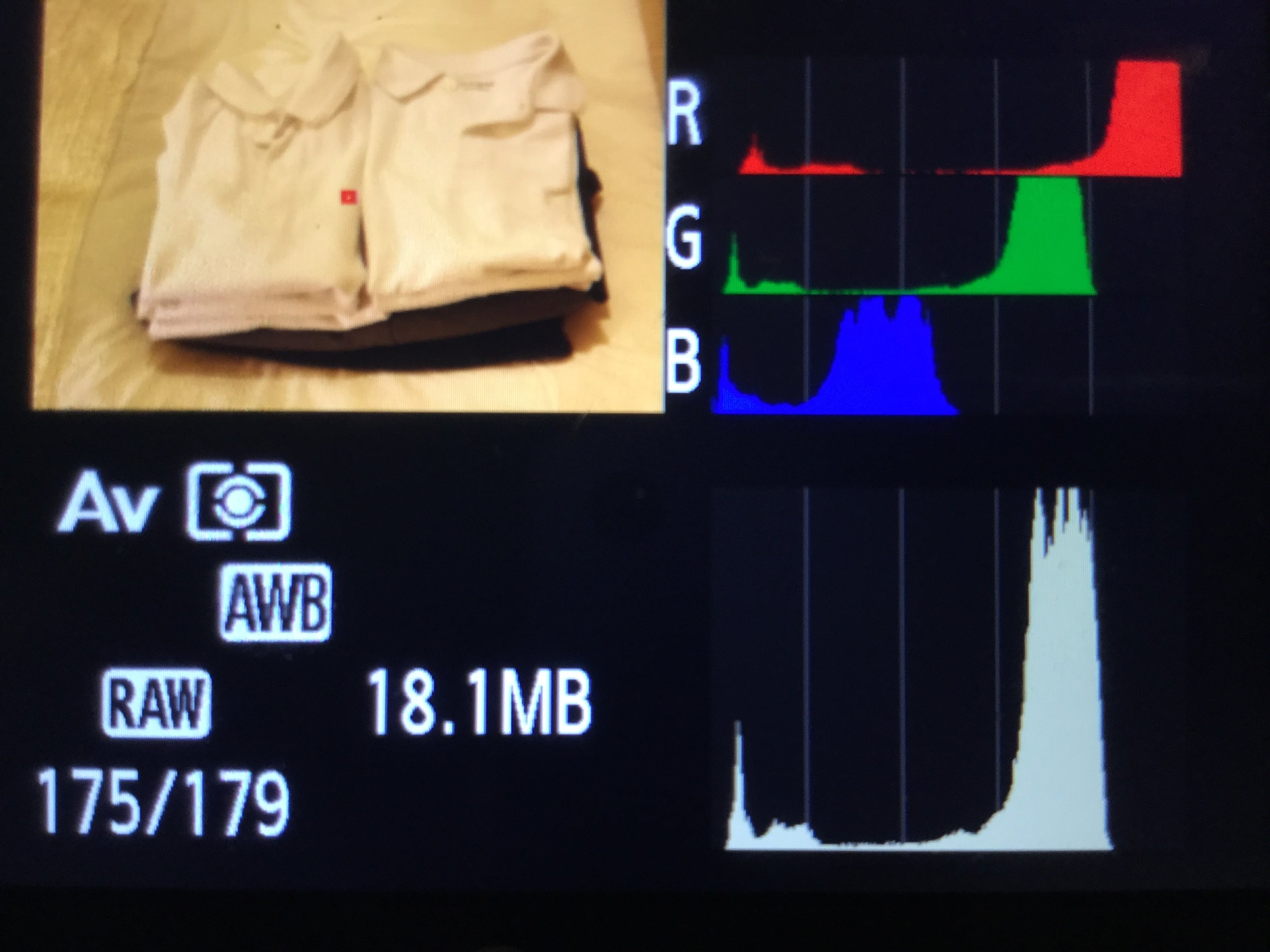

The DeepDream algorithm was produced as an adaptation of a computer program called Inception. Inception is a neural network developed to detect common patterns in images. Networks of this kind are commonly used in face recognition, but they can also distinguish and group wider common features within imagery. The neural network is developed by training it to classify a large dataset of labelled photographs (a supervised process). The technique produces multiple nodes (neurons), each of which represents a grouping of visually similar image characteristics. Once trained, the network can also be used to ‘back-propagate’. DeepDream is, in effect, the process of running the trained neural network in reverse. Instead of recognising and classifying the elements within an image, the program is asked to adjust the image slightly so that the elements it detects conform more closely to those previously learned. Asking the program to reprocess the image iteratively has the effect of morphing the image into the elements the algorithm recognises. My contact sheets show the development of these images.
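To make the idea of ‘adjusting the image slightly, over and over’ more concrete, here is a minimal sketch in Python. It is not the online service I used, and it is not Inception: the ‘network’ is a single hypothetical layer with random weights, and the names `layer_activations`, `dream_step`, `dream` and `score` are my own illustrations. It only shows the general principle, assuming the usual description of DeepDream as repeatedly nudging the pixels in the direction that strengthens what a layer already detects.

```python
import numpy as np

def layer_activations(image, weights):
    """Toy 'layer': a linear map followed by ReLU (a stand-in for a trained network)."""
    return np.maximum(weights @ image.ravel(), 0.0)

def score(image, weights):
    """How strongly the toy layer responds to the image."""
    return 0.5 * float(np.sum(layer_activations(image, weights) ** 2))

def dream_step(image, weights, step_size=0.01):
    """One small adjustment of the pixels that increases the layer's response."""
    acts = layer_activations(image, weights)
    # Gradient of the score with respect to the pixels (back-propagated by hand).
    grad = (weights.T @ acts).reshape(image.shape)
    grad /= np.abs(grad).mean() + 1e-8  # normalise so each step is a similar size
    return np.clip(image + step_size * grad, 0.0, 1.0)

def dream(image, weights, iterations=20, step_size=0.01):
    """Repeat the small adjustment many times, as in the iterative reprocessing."""
    for _ in range(iterations):
        image = dream_step(image, weights, step_size)
    return image

rng = np.random.default_rng(0)
seed_image = rng.uniform(0.4, 0.6, size=(8, 8))  # hypothetical 8x8 greyscale 'seed'
weights = rng.normal(size=(16, 64))              # hypothetical random 'learned' features
dreamed = dream(seed_image, weights)
```

In a real DeepDream run the random weights would be replaced by a network trained on photographs, which is why recognisable shapes emerge rather than noise.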

Reflection and Further Development

As part of the submission for this exercise, I have the following additional reflections, along with thoughts on how I may develop the series.

· Critique – I mentioned that I wanted to challenge myself in this assignment by trying something different. I still have mixed emotions about the level of digital manipulation I have used, even in the context outlined. My other concern is how derivative the work is: the DeepDream process had a wave of popularity in mid-2015, when Google released the code as open source. On the positive side, I am pleased that the outcome is different from the alternative study of a single object from different angles.

· Further Development – If I were to develop this series further, I would like to take back some of the control over the image manipulation. The algorithm I used was the same as the one trained by the original developers. One of the criticisms of it was that certain image types (e.g. dogs) were over-represented in the original data set. One way to improve this would be to retrain the algorithm on a new image base, which would also make the outcome more original. However, this would mean developing a coded solution; I used an online service in this exercise.